WHY RELATIVITY OUGHT TO FAIL AT HIGH ENERGIES.

Why Relativity Is Not That Relative: A Theory Of Velocity?

***

Main Idea: Theoretical triage on Special Relativity leaves the theory in shambles at high energies. A precise mechanism that blows up the theory is produced, and it may allow Faster Than Light (FTL) motion.

***

Abstract: I don’t know whether neutrinos go faster than light or not. The OPERA neutrino anomaly rests on some guesswork about the shape of long neutrino pulses, so its superluminal results may well be a mirage. (The experiment will soon be run with very short pulses.)

However neutrinos may go Faster Than Light. Why not? Because the idea has caused obvious distress among many of the great priests of physics?

In science one should never suppose more than necessary, nor should one suppose less than necessary. Faith is necessary in physics, but it should reflect the facts, and nothing but the facts. Such is the difference between physics and superstitious religion.

There are reasons to doubt that the leanness of Relativity reflects appropriately the subtlety of the known universe. This is what this essay is about.

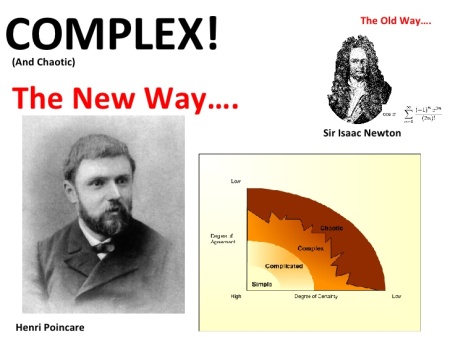

OK, I agree that the equations of what Henri Poincare’ named “Relativity”, seem, at first sight, to lead one to doubt Faster Than Light speeds. Looking at those equations naively, and without questioning their realm of validity, it looks as if, as one approaches the speed of light, one gets infinitely heavy, short, and slow.

However, adding as an ingredient much of the rest of fundamental physics, I present below an argument that those equations ought to break down at very high energies.

As my reasoning uses basic “Relativity” (Special and General), plus basic Quantum physics, one may wonder why something so basic was not pointed out before.

The answer is that, just as in economics most people are passengers on the Titanic, in cattle class, so it is in physics.

Most people have a quasi-religious approach to “Relativity”, and their critical senses have been so stunted that they even fail to appreciate that Einstein did not invent “Relativity” (as I will perhaps show in another, gossipy essay). This is of some consequence, because Einstein had a rather shallow understanding of some of the concepts involved (as he proved aplenty in his attempts at a Unified Field Theory… Pauli called them “embarrassing”… well, maybe, my dear Wolfgang, all of Relativity was embarrassing, another collective German hallucination…)

The logic of the essay below is multi-dimensional, but tight. As it throws most of relativity out, I am going to be rather stern describing it:

1) At high energies, absolute motion can be detected. This renders Galileo’s Relativity, and its refurbishment by Poincare’, suspicious.

2) Time dilation is real. There is no “Twin Paradox” whatsoever; fast clocks are really slow. This is well known, but I review the physical reason why.

3) Length contraction is also real. Moving rods really shrink. It is not a question of fancy circular definitions (as some have had it). I show this below by an electromagnetic argument which makes the connection between the relativistic transformations and Maxwell’s equations obvious.

4) A contradiction is derived. A particle would suffer gravitational collapse if its speed came close enough to the speed of light, as it would get confined within its Schwarzschild radius, should the equations of “Relativity” remain the same at all energies. A particle gun, at high enough energy, would become a black hole gun. That would violate all sorts of laws.

Thus “Relativity” breaks down. The simplest alternative is that some of the energy spills into acceleration (beyond c!) instead of mass acquisition.

5) Einstein’s conviction that Faster Than Light is equivalent to time travel is shown to be the result of superficial analysis. If the equations of relativity break down at high energies, they cannot be used to present us with negative time. Although I will not insist on this too heavily in this particular essay, Einstein got confused between a local notion (time) and a non local one (speed, and its parallel transport around loops).

As a humorous aside, should high energy neutrinos go faster than light, it should be possible to measure time beyond the speed of light, using a high energy neutrino clock.

Thus a reassessment of “Relativity” is needed, starting with the name: if all the “Relative” laws blow up at high energies, that is, at high velocity, uniform motion is not relative, but absolute. The theory of Relativity ought to become the Theory of Velocity (because, at intermediate energies, all of the laws of the present “Theory of Relativity”, including fancy rotational additions of velocities, do still apply!) Mach’s principle, already absolute for rotational motion, and already favoring a class of uniform motion, would become absolute for any motion.

***

ABSOLUTE, UNIFORM MOTION IS DETECTABLE… IF ENERGETIC ENOUGH:

Poincare’ named the theory that he, Lorentz and a dozen other physicists invented, “Relativity“. Yes, Poincare’, not Einstein: this essay is about truth, not convention to please a few thousand physicists and a few billions imprinted on the Einstein cult. (And I like Einstein… When he is not insufferable.)

That name, “Relativity”, may have been a mistake. A better name, I would suggest, would be “THEORY OF VELOCITY“, for the following reasons:

In 1904, summarizing the experimental situation then, Henri Poincare’ generalized the Galilean relativity principle to all natural phenomena, writing:

“The principle of relativity, according to which the laws of physical phenomena should be the same, whether to an observer fixed, or for an observer carried along in a uniform motion of translation, so that we have not and could not have any means of discovering whether or not we are carried along in such a motion”.

A first problem is that the American Edwin Hubble, and others, ten years after the premature death of Poincare’, discovered cosmic expansion, which defines absolute rest. OK, Galileo would have said:

“Patrice, just don’t look outside, I told you to stay in your cabin, in the bowels of the ship.” Fair enough, but rather curious that inquiring minds ought to be blind.

However, fifty years after the death of Poincare’, the Cosmic Microwave Background (CMB) was discovered. That changed the game completely. You can stay in the bowels of the ship all you want, Galileo: when you move fast enough relative to the CMB, the wall of your cabin facing the direction of your uniform motion, v, will turn incandescent, and then into a plasma, as the CMB will turn into gamma rays, thanks to the Doppler shift. Even burying Galileo’s head in the sand will not work. Even a Galileo ostrich can’t escape a solid wall of gamma rays.
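
To put a rough number on “fast enough”, here is a minimal Python sketch. It is only an order-of-magnitude illustration: it assumes a CMB temperature of 2.725 K, takes a typical CMB photon energy of about 2.7 kT, and uses 100 keV as a conventional (and somewhat arbitrary) threshold for gamma rays. The function names are made up for the purpose. For photons met head-on, the relativistic Doppler factor is the square root of (1 + v/c)/(1 – v/c).

```python
import math

K_BOLTZMANN_EV = 8.617333e-5   # Boltzmann constant in eV per kelvin
T_CMB = 2.725                  # assumed CMB temperature in kelvin
GAMMA_RAY_EV = 1.0e5           # assumed gamma-ray threshold: 100 keV


def head_on_doppler_factor(beta):
    """Blueshift factor for photons met head-on at speed beta = v/c."""
    return math.sqrt((1.0 + beta) / (1.0 - beta))


def lorentz_gamma_for_doppler(doppler):
    """Lorentz factor gamma that yields a given head-on Doppler factor D.

    From D = sqrt((1 + beta) / (1 - beta)) one gets gamma = (D + 1/D) / 2.
    """
    return 0.5 * (doppler + 1.0 / doppler)


# Typical (mean) CMB photon energy, roughly 2.7 kT.
typical_photon_ev = 2.7 * K_BOLTZMANN_EV * T_CMB
needed_doppler = GAMMA_RAY_EV / typical_photon_ev
needed_gamma = lorentz_gamma_for_doppler(needed_doppler)

print(f"typical CMB photon energy: {typical_photon_ev:.2e} eV")
print(f"Doppler factor needed:     {needed_doppler:.2e}")
print(f"Lorentz factor needed:     {needed_gamma:.2e}")
```

Under these assumptions the required Lorentz factor is enormous, which is the sense in which the effect only shows up at very high energies.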

Relativity fanatics may insist that they are correct in first order, when they don’t go fast enough. OK, whatever: the equations of “Relativity” do not change shape with v, but, as I just said, the bowels of Galileo’s ship will always disintegrate, if the speed is high enough.

When equations don’t fit reality, you must them quit. That’s why it’s called science.

But let’s forget this glaring problem: as I said, I have found a much worse one. To understand it requires some background in so called “Relativity”, the theory of Gravitation (so called “General Relativity”, an even more insipid name), and Quantum physics.

We have to go through some preliminaries which show that time dilation and length contraction are real physical effects. There is nothing relative about them. So it’s not just the relativity of uniform motion which is not relative. It is the other two basic effects of relativity which are not relative either.

People can write all the fancy “relativistic” equations they want, a la Dirac, and evoke some spacetime mumbo jumbo. Those equations rest on, and depict, the three preceding relative effects, and if these are not relative at high energies anymore, one cannot use them at very high energies either. Paul Dirac can sing song that they are pretty all he wants, like a canary in a coal mine. Physics is not a fashion show. It’s about what’s really happening. If the canary is dead, it’s time to get out of there.

***

POINCARE’-LORENTZ LOCAL TIME (prior to 1902):

One central notion of standard relativity is “Local Time“, which Poincare’ named and extracted from the work of Lorentz (sometimes calling it “diminished time“). We have:

t’ = t multiplied by square root of (1- vv/cc)

[Because of problems with the Internet carrying squares and square roots properly, I write cc for the square of c, instead of c^2, as some do, etc… After all, it’s exactly what it is. In the end, mathematics is eased, and rendered powerful by abstraction, but it is all about words.]

So when v= 0, t’ = t and when v = c, t’ = 0, or, in other words, t’ stops. Here t’ is the time in the coordinate system F’ travelling at speed v relative to the coordinate system F, with its time t. More exactly t is what one could call “local electromagnetic time“. Some physicists would get irritated at that point, and snarl that there is nothing like “local electromagnetic time”. In science precision is important: we are more clever than chimps because we make more distinctions than chimps do.
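
The formula can be tried out numerically. The following Python sketch is illustrative only: the function name `local_time` and the sample speeds are chosen for the example. It simply evaluates t’ = t multiplied by square root of (1 – vv/cc), and shows the two limits just mentioned.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def local_time(t, v):
    """Local ("diminished") time t' in F' for a time t in F, at relative speed v."""
    if abs(v) > C:
        raise ValueError("the formula is only defined for |v| <= c")
    return t * math.sqrt(1.0 - (v * v) / (C * C))


# Sample speeds, expressed as fractions of c.
for fraction in (0.0, 0.1, 0.5, 0.9, 0.99, 1.0):
    v = fraction * C
    print(f"v = {fraction:4.2f} c  ->  t' = {local_time(1.0, v):.6f} t")
```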

How do we find t’ knowing t? We look at a light clock in F’ from F. If we look at a light clock perpendicular to v, in F’, from F, we see that light in F’ will have to cover more distance to hit the far mirror of the light clock. That clock will run slow. If we suppose there is only one measure of time in F’, that means time in F’ will run slow. (This unicity of time is a philosophical hypothesis, but it has been partly confirmed experimentally since.) This is what Poincare’ (also) called “diminished time”, in his 1902 recommendation of Hendrik Lorentz for the Nobel Prize in physics (for what Poincare’ called the Lorentz transformations).

The math to compute t’ from t uses nothing harder than Pythagoras’ theorem. The idea is to compare two IDENTICAL light clocks, both perpendicular to v, one in F, standing still, the other in F’, moving along at v. In its own frame, light crosses the moving clock from one mirror to the other in time t’, so the mirrors are separated by ct’. We look at the situation from F. When the F’ light has gone from one mirror to the other in the moving clock, a time t has elapsed in F, and the light has covered ct along a slanted path. Why that? Well, light is supposed to always (appear to) go at the same speed, the astronomers’ practice, as Poincare’ reiterated in 1898.

Meanwhile the origin where the light emanated from in F’ has moved by vt. By Pythagoras:

(ct)(ct) = (vt)(vt) + (ct’)(ct’). Solving for t’ gives the relation between t’ and t above.

***

FITZGERALD CONTRACTION IS A REAL PHYSICAL EFFECT:

The Irish physicist Fitzgerald suggested that, to explain the null result of the Michelson-Morley experiment, the arm of the instrument was shortened, as needed. What is the Michelson-Morley device? Basically two light clocks at right angles, one perpendicular to v, the other along v. These two arms make it possible to have light interfere, after it has gone back and forth either along the direction of v, or perpendicular to it.

No difference was found. The way I look at it, it shows that electromagnetic time is indifferent to direction. The way it was looked at then was that there was no “ether drag“.

What is going on physically is very simple: F’ moves relative to F. Light released at x = x’ = t = t’ = 0 is going to have to catch up with the other mirror. (Notice that this is a slight abuse of notation, as the xs and ts are in different dimensions, and F and F’ in different coordinate systems…) Suppose v is very high. Seen from F, it is obvious that photons will take a very long time to catch up with the mirror at the end of the arm. Actually, if v were equal to the speed of light, they would never catch up (if someone looked in a mirror, when going at the speed of light, she would stop seeing herself).

Well, however, the M-M device showed no such effect. Thus the only alternative was that the length of the M-M interferometer shrank, as Fitzgerald proposed in 1889 (16 years before Einstein’s duplication).
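
The catching-up argument can be checked with a few lines of arithmetic. The Python sketch below is an illustration under textbook assumptions (an arm of rest length L, light at speed c in F, the device moving at v); the function names and the sample values are invented for the purpose. Seen from F, light takes L/(c – v) to catch the receding mirror and L/(c + v) to come back, while in the perpendicular arm it follows the slanted path of the light clock. The two round trips agree only if the arm along v is shortened by the square root of (1 – vv/cc).

```python
import math

C = 299_792_458.0  # speed of light in m/s


def round_trip_along(length, v):
    """Round-trip time, seen from F, in the arm parallel to v."""
    return length / (C - v) + length / (C + v)


def round_trip_across(length, v):
    """Round-trip time, seen from F, in the arm perpendicular to v."""
    return 2.0 * length / math.sqrt(C * C - v * v)


def contracted(length, v):
    """Fitzgerald contraction of an arm lying along v."""
    return length * math.sqrt(1.0 - (v * v) / (C * C))


arm = 11.0     # arm length in metres (illustrative value)
v = 0.5 * C    # exaggerated speed so the effect is visible

print("no contraction:  ", round_trip_along(arm, v), round_trip_across(arm, v))
print("with contraction:", round_trip_along(contracted(arm, v), v),
      round_trip_across(arm, v))
```

Without the contraction the parallel arm is slower than the perpendicular one; with it, the two times coincide, which is the null result.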

Poincare’ introduced “Poincare’ stresses” to explain the effect as a real physical effect. That was explored further in 1911 by Lorentz, and then worked out in even more detail in the 1940s, using Dirac’s quantum electrodynamics.

The reason I am giving all these details is that Einstein could not understand Poincare’s insistence on “mechanical” models. That’s OK; not everybody can be super bright.

Einstein preferred the more formal insistence that the arm along v had to shrink, because c was constant, and that was it. This was exactly Fitzgerald’s initial reasoning, and it does not explain anything: true, it seems necessary that the arm will shrink, but is it really happening, and if so, how? Einstein’s platitudes about the mind of god, which he was most apt to seize, are just plain embarrassing… Especially as it turns out that, in this case, the one who got the idea was Poincare’. Maybe a god to Einstein, but to me, just a man. (All the more confusing as Einstein tried to refer, and defer, to Poincare’ less than justice required.) By clinging to Fitzgerald’s original vision like a rat to a reed in the middle of the ocean, Einstein aborted the debate with a hefty dose of superstition.

As the detailed development of Quantum Field Theory showed, the mechanical models were the way to go. They reveal a lot of otherwise unpredictable, unanalyzable complexities. Just as we are going to do below.

So what is happening with the relativistic contraction?

Maxwell’s equations tell us how the electromagnetic field behaves. In ultra modern notations, they come down to dF = 0, d*F = q (d being the exterior derivative, and q standing for the charge and current sources). Very pretty.

Maxwell is not all, though. The Lorentz force equation tells us how particles move, when submitted to the electromagnetic field. It is:

Force = q(E + v × B)

[E, B are vector fields; v × B is the (vector) cross product of the particle velocity, the vector v, with B.]

Let’s suppose the particle moves at v. As it does, it will be reached simultaneously by the electromagnetic field from two different places. Say one of these field elements will be a retarded component, Fretarded, and the other is obtained from Pythagoras theorem, using the same sort of diagram used for a light clock. One can call that component Frelativistic. Frelativistic is proportional to the usual gamma factor of relativity, namely 1/sqrt(1-vv/cc). The total field incorporates Fretarded plus Frelativistic. The relativistic component basically crushes any particle sensitive to the Lorentz force in the direction of motion.

In particular, it will crush electronic orbitals. So atoms will get squeezed. That reasoning, by the way, explains directly, and physically, why the Lorentz transformations are the only ones to respect the Maxwell equations. It is better to achieve physical understanding rather than just formal understanding (by the way, professor Voigt found the formal argument for the Lorentz transformations in 1887, 18 years before Einstein).

How do formal relativists a la Einstein look at this? They look at it eerily, not to say… ethereally. Max Born, a Nobel Prize winner (for the statistical interpretation of the Quantum waves) and a personal friend of Einstein, expounds the formalism with coherently infuriating declarations around p 253 of his famous book “Einstein’s Theory of Relativity”.

“For if one and the same measuring rod…has a different length according to it being at rest in F, or moving relative to F, then, so these people say, there must be a cause for this change. But Einstein’s theory gives no cause; rather it states that the contraction occurs by itself, that is an accompanying circumstance to the fact of motion. In fact this objection is not justified. It is due to too limited view of the concept “change”. In itself such a concept has no meaning. It denotes nothing absolute, just as data denoting distances or times have no absolute significance. For we do not mean to say that a system which is moving uniformly in a straight line with respect to an inertial system F ‘undergoes a change’ although it actually changes its situation with respect to system F.

The standpoint of Einstein’s theory about contraction is as follows: a material rod is physically not a spatial thing, but a spacetime configuration.”

This sort of theology will remind some of Heidegger’s writings, when the pseudo philosopher looks for the “ground” and never finds it. Too much relativity will do that for you. Stay with confusion, end up with Auschwitz.

Could it be that, according to Born, a bird which flies by does not change, as it ‘actually changes its situation as a spacetime configuration’? Thus the bird at rest on its branch has not changed into a bird flying. Maybe Born should have received another Nobel for this other wonderful theory?

What Born forgets is this: 1) let’s suppose there is something as absolute rest (given, once again, by the reality of the CMB). 2) then a fast frame passing by has been colossally accelerated first before reaching that high relative speed when vv/cc approaches 1. So the fast frame has undergone an absolute change… And I explained what it is.

3) trying to play relative games between relatively relativistically moving frames does not wash, as there is a privileged state of rest (or quasi rest: the Earth moves at 370 km/s relative to the CMB).

The derivation I sketched above provides a cause for the change. It shows that, by reason of the uniform displacement, a real boost in the e-m field occurs which causes the contraction. So, basically it’s the electromagnetic geometry of uniform motion which causes the Lorentz transformations. It’s deep down not mysterious at all.

The reasons for real time dilation and real length contraction are plain, and absolute.

This is all about the geometry of electromagnetism. When Einstein wrote down in marble his formal considerations about relative this, relative that, a full generation would elapse before the discovery of the neutrino, which responds to weak interactions (OK, there is an electroweak theory, but we are not going to remake all of physics in this essay!)

By the way, I can address in passing another confusion: some will say that by standing on the surface of the Earth one undergoes an acceleration of one g (correct!), and thus why would an acceleration of one g in a straight line for a year (which is enough for high relativistic speeds) cause all these absolute changes I crow about?

The reason is simple: in one case no energy is stored, the acceleration is purely virtual, as the ground is in the way. In the other case, a tremendous amount of energy piles up inside the moving body.

***

CLINCHER: YOU CAN’T ALWAYS SQUEEZE WHAT YOU WANT, BUT IF YOU TRY SOMETIMES, YOU GO FTL:

The Schwarzschild radius is given by R = 2GM/cc, where M is the mass of the body, G is the universal constant of gravitation, and c is the speed of light.

The energy of a particle is E = hf, where h is Planck’s constant, and f the frequency of its matter wave. Plug in Poincare’ mass-energy relation: E = M cc. Now E/cc is inertial mass M. Thus it causes, by the equivalence principle, the gravitational mass: hf/cc.

Thus the Schwarzschild radius of a particle of matter wave of frequency f is: R = 2Ghf/cccc.

But now comes the clincher: As the particle accelerates, at ever increasing speed v, its matter wave will shrink ever more, from (Fitzgerald) length contraction. At some point the wave will shrink within the Schwarzschild radius of the particle.

If one takes the gravitational collapse of the particle within itself at face value, the particle would exit physics, never to be seen again, but for its gravitational effect. That is obviously impossible.

So something has to give in the equation F = ma; here F is the force through which one pumps energy into the system (an electromagnetic field, or a muon jet, whatever), m is the relativistic mass, and a is the acceleration. What we saw is that m is bounded. Thus a has to augment, and the particle will accelerate through the speed of light. QED.

Some could object that I used the photon energy relation: E = hf, instead of M = (Rest Mass)/ sq. root (1 – vv/cc). But that would not change the gist of the argument any.

[Neutrinos are suspected of having a non zero mass, because they oscillate between various states; but the mass is so small, it’s not known yet. Similarly the question of the photon rest mass is an experimental problem!]

Another objection could be that I used the Schwarzschild radius, but it does not apply to single particles. Indeed, the initial argument (Tolman Oppenheimer Volkoff), was that the gravitational force would overwhelm the nuclear force inside an extremely dense star. The nuclear force is repulsive at short distance.

[I tried to show a picture of the nuclear force, but I had to give up as the WordPress primitive system does not allow me to.]

A rough sketch of the force between two nucleons shows a strong repulsive peak, which is, however, finite. The force depends upon gluon exchanges, is very spin dependent, etc… The idea of TOV was that gravitation would overwhelm it, and neutron degeneracy, under some circumstances. The basic idea in this essay is the same, although it is the all too real Lorentz-Fitzgerald contraction, not gravity, which does the crunching.

Previously Einstein had tried to demonstrate the Schwarzschild singularity had no physical meaning. His argument was contrived, erroneous. TOV succeeded in proving Einstein wrong (Hey, it can be done!)

Besides, all these subtleties can be blown away by looking at particles the strong force is not applied to, such as photons or neutrinos. They both have mass, as far as creating geometry is concerned.

In the Schwarzschild computation, a term shows up, causing a singularity at a finite distance. The term is caused purely by the spherical coordinates, and the imposition of the vacuum Einstein gravitational equation. It is indifferent to kinetic effects (an important detail, as I put the Fitzgerald squeeze on). That term is identified with a mass for purely geometrical reasons. That mass will appear through Poincare’s E = mcc, or m = hf/cc, after plugging in the de Broglie relation.

This allows one to circumvent Hawking style, trans-Planckian arguments (which, anyhow, Hawking superbly ignored in deriving Hawking radiation).

In any case f = square root [ccccc/(2Gh)]. Plugging in the numbers, one gets trouble when the frequency gets to ten to the power 43 or so. That’s about ten to the power 16 TeV, or roughly one ton of TNT. About a billion billion times more energetic than the CERN neutrinos. But then, of course, the effect would be progressive, somehow proportional to energy. (Also see Large Dimensions below.)
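
The numbers can be reproduced with a short Python sketch. It is a back-of-the-envelope check, not a derivation: it assumes that trouble starts when the wavelength c/f equals the Schwarzschild radius 2Ghf/cccc, which gives the frequency above, and it uses the common convention of 4.184 billion joules per ton of TNT. The function and variable names are chosen for illustration.

```python
import math

G = 6.67430e-11          # gravitational constant, m^3 kg^-1 s^-2
H = 6.62607015e-34       # Planck's constant, J s
C = 299_792_458.0        # speed of light, m/s
EV = 1.602176634e-19     # one electron-volt in joules
TON_TNT = 4.184e9        # one ton of TNT in joules (convention)


def schwarzschild_radius(frequency):
    """R = 2Ghf/cccc for a matter wave of frequency f."""
    return 2.0 * G * H * frequency / C**4


def critical_frequency():
    """Frequency at which the wavelength c/f equals the Schwarzschild radius."""
    return math.sqrt(C**5 / (2.0 * G * H))


f_crit = critical_frequency()
energy_joules = H * f_crit   # E = hf at the critical frequency

print(f"critical frequency: {f_crit:.2e} Hz")
print(f"energy:             {energy_joules / EV / 1e12:.2e} TeV")
print(f"TNT equivalent:     {energy_joules / TON_TNT:.2f} tons")
```

Using the reduced Planck constant, or a different criterion for when the wave fits inside its own radius, shifts these figures by factors of order one, so only the orders of magnitude should be taken seriously.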

***

EINSTEIN GRANDFATHER OBJECTION SELF CONTRADICTING:

Conventional physicists will make the following meta objection to the preceding. According to the lore of the standard theory of Special Relativity, the preceding scheme is completely impossible. Countless physicists would say that if one had a particle going faster than light, one could go back in time. Einstein said this, and everybody has been repeating it ever since, because Einstein looked so brainy.

Or maybe because he was also German. Thus he had got to have invented the Poincare’-Lorentz relativity! (We meet here again the Keynes-Hitler problem, that, in much of the Anglo-Saxon world, blind admiration for anything German, reigns sometimes to the point of spiting reason. I am myself fanatically pro-German, but there are lines of prejudice I will not cross).

OK, granted, there is some mathematical superficiality to support Einstein’s confusion between speed of light, time, and causality. Those mathematics are reproduced in the next chapter, where the mistake is exposed.

There are three problems with using the Grandfather Paradox to shoot down my reason for FTL.

The first objection is that Einstein’s General Relativity itself makes possible the “Grandfather Paradox”. So it is rather hypocritical to use it to fend off contradictions of (Special) Relativity.

That grandfather paradox increasingly haunts standard fundamental physics. The paradox arises from “Closed Timelike Curves“, which appear in (standard) Relativity (not in my version of Relativity). Hawking was reduced around 1992 to the rather ridiculous subterfuge of a “Time Protection Conjecture“.

The second objection to the objection is that, in practice, experimentally, the objection has proven irrelevant, by years of increasingly precise experiments. Basically what happens is that Quantum theory allows Faster Than Light teleportation of some sort (“states”, as the saying goes). And experiments are confirming this, leading to claims that time travel has been achieved, experimentally. There are many references; here I give one from July 2011. One of the authors, professor Lloyd from MIT, is a Principal Investigator on just this sort of problem.

Personally I have no problem with the results: they fit my vision of things, which is very friendly to Faster Than Light. But I have a problem with the standard semantics of “time travel“, which comes straight from the way Einstein looked at time.

Einstein was, in my opinion, very confused about time. He notoriously said: “time is nothing but a stubbornly persistent illusion“. In my vision of physics, time is fundamental, and it has nothing to do with space. I believe the notion of spacetime applies well to gravitation, at great distances, such as Earth orbit. I used above one of the staples of “General Relativity”, the Schwarzschild radius, but very carefully, from first principles. When people argue “time travel“, they argue from later principles, later day saints, so to speak. They confuse cornucopia and utopia.

I believe this: Time is the measure of the change of the universe. It’s not subject to travelling. But it is subject to confusion, and Einstein is the latter’s prophet.

(I also believe that the second Law of Thermodynamics applies even at the subquantal level (the subquantal level is what those who study entanglement, non locality, in Quantum physics, such as the authors I just quoted, tangle with). OK, the latter point is beyond this essay, the main theme of which does not use it at all.)

***

THE EINSTEIN GRANDFATHER ERROR:

The third objection to using the grandfather paradox to contradict me is much more drastic, and revealed by my own pencil and paper (the following is just an abstract of my reasoning). The conventional reasoning due to Einstein is faulty (and faulty in several ways).

Most specialists of relativity subscribe to statements such as “it should be possible to transmit Faster Than Light signals into the past by placing the apparatus in a fast-moving frame of reference“.

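Einstein’s argument rests on equations for time such as t’ = (t – vx/cc)/square root of (1- vv/cc), where x = wt is the distance covered by the signal, so that t’ = t(1 – vw/cc)/square root of (1- vv/cc).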

In this v is supposed to be the speed of a moving frame, and w the speed of an alleged Faster Than Light signal. One sees that, given a w > c, there is a v close enough to, but inferior to, c, such that t’ becomes negative.
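
The claim is easy to check numerically. The sketch below, in Python, uses the standard Lorentz form t’ = (t – vx/cc)/square root of (1 – vv/cc), with x = wt for a signal of speed w; the function names and the sample value w = 2c are illustrative assumptions. In this form t’ turns negative as soon as vw exceeds cc, that is, for v above cc/w.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def arrival_time_in_moving_frame(t, w, v):
    """Time t' in a frame moving at v for a signal of speed w received at time t."""
    x = w * t  # distance covered by the signal in F
    return (t - v * x / (C * C)) / math.sqrt(1.0 - (v * v) / (C * C))


def frame_speed_threshold(w):
    """Frame speed above which t' becomes negative: v = cc/w."""
    return C * C / w


w = 2.0 * C                      # hypothetical FTL signal speed
v_flip = frame_speed_threshold(w)
print(f"t' changes sign at v = {v_flip / C:.2f} c")

# Frame speeds on either side of the threshold, as fractions of c.
for fraction in (0.25, 0.49, 0.51, 0.9):
    t_prime = arrival_time_in_moving_frame(1.0, w, fraction * C)
    print(f"v = {fraction:.2f} c  ->  t' = {t_prime:+.4f}")
```

Note that the sketch takes the Lorentz transformation at face value for every v below c, which is exactly the assumption questioned in what follows.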

t’ is time. So what we have, given a Faster Than Light signal w, are frames moving with enough of a speed v in which events seem to be running in reverse. Thus Very Serious Professors have argued that the putative existence of that FTL w reverses causality. They abbreviate this by saying that time travel is a consequence of FTL. And what do I say to this howling of the Boeotians? Not so fast.

Indeed, this ought to be physics, not just vulgar mathematics. One argument was overlooked by Einstein, who apparently believed blindly in Poincare’s Relativity Postulate even more than its author himself did.

We have discovered above that there is a limit energy, beyond which Relativity breaks down. Thus the Lorentz transformations break down.

Let’s look at the situation in a more refined way. What happens to the traditional transverse light clock encountered above, and in all traditional Relativity treatises? Well, in this thought experiment, admitting my reasoning above as valid, the light clock, if made energetic enough, will start to accelerate faster than light. So it would stop functioning, because light will not be able to catch up with it. This may sound strange, but it’s not. In the mood of the preceding, it just says that the light used is limited in energy, and the clock is not. To still keep time for a while, we may have to use a high energy neutrino clock (supposing that neutrinos, indeed, travel Faster Than Light; a neutrino clock is built exactly like a photon clock, with the photons replaced by neutrinos).

This is why the Lorentz transformations fail: because the clocks fail, as the light cannot catch up with the mirror. Pernicious ones could claim I self contradict, as my argument used the Fitzgerald contraction. Yes, I used it, because it failed. In my logic, the contraction fails first.

Another argument is that the alleged violations of causality hard core relativists find in the appearance of negative times are much less frequent than they fear, because time dilation dilutes the statistics. A relativistic point they absolutely ignore. Also, as I have argued in the past, in https://patriceayme.wordpress.com/2011/09/01/quantum-non-locality/, Quantum physics non locality, in conjunction with Faster Than Light space expansion, implies violations of local causality. Basically Quantum entanglements can happen beyond the space horizon given by the cosmic FTL expansion (which is an experimental fact).

Thus causality has to be taken with a grain of salt, and plenty of statistics (a characteristic of modern physics, at least since Boltzmann).

***

AND LET’S NOT FORGET FASTER THAN LIGHT FROM THE QUANTUM…

In physics, these days, a thousand theories blossom, and nearly a thousand fail miserably. So, on the odds, it ought to be the case with the preceding theory. However the preceding rests on fundamentals, whereas much of the fashionable stuff rests on notions nobody understands, and few bothered to study (such as the interdiction of FTL, which rests just on the shallow logic of Einstein exposed above).

An interesting sort are the Large Extra Dimension theories (partly instigated and made popular by the famous Lisa Randall, a glamorous professor from Princeton, Harvard and Solvay, author of the just published “Knocking on Heaven’s Door”). They would help the preceding arguments, by lowering considerably the threshold of relativity’s high energy failure. Thus, if superluminal neutrinos are indeed observed, in light of the preceding, they would suggest the existence of Large Extra Dimensions.

Many of the theories which have blossomed are “not even wrong”. The obsession with superstrings was typical. Why obsess over those, when basic Quantum theory had not been figured out? Is it because superstrings cannot be tested?

Basic Quantum theory is subject to experiments, and some have given spectacular, albeit extremely controversial results. There was curiously little interest in confronting those tests. If nothing else, the OPERA experiment illustrates that the failure of Relativity at high energy can be tested (OPERA was really out to test neutrino oscillations, which are, already, a violation of Relativity in some sense, as they confer a (rest?) mass on a particle going at the speed of light!)

The preceding theory, and its absolute frame, fits like a glove with the idea of an absolute (but stochastic and statistical) space, constructed from Quantum Interaction (or “potential” as Bohm has it). That would partly cause the CMB. That theory rests on the predicted failure of standard Quantum theory at large distances, and the existence of an extremely Faster Than Light interaction. It’s getting to be a small world, theoretically speaking…

***

Patrice Ayme

***

P/S: The reasoning above is so simple that normal physicists will not rest before they can brandish a mistake. So what could that mistake be? Oh, simply that the perpetrator, yours truly, did not use full Relativistic Quantum Mechanics, obviously because s/he is ignorant. But referring to Relativistic Quantum Mechanics, by itself, would use in the proof what one wants to demonstrate with the proof. Relativistic Quantum Mechanics assumes that Relativity is correct at any speed. I don’t. I don’t, especially after looking outside.

OK, that was a meta argument. Can I build a more pointed objection? After all, Dirac got QED started on esthetic grounds, while elevating relativity to a metaprinciple. But beauty is no proof. Nor is the fact that Dirac’s point of view led to several predictions (Dirac equation for electrons, Spin, Positrons).

One could say that the Heisenberg Uncertainty Principle (HUP) would prevent the confinement of a particle in such an increasingly small box. However, the HUP is subordinate to general De Broglie Mechanics (GDM), and there is no argument I see why GDM could not get confined.
